February 4, 2026

Lightningbeam: The beginnings

Welcome to the Lightningbeam development blog! This is where I'll be posting updates on the project, sharing progress, and writing about the technical challenges I encounter.

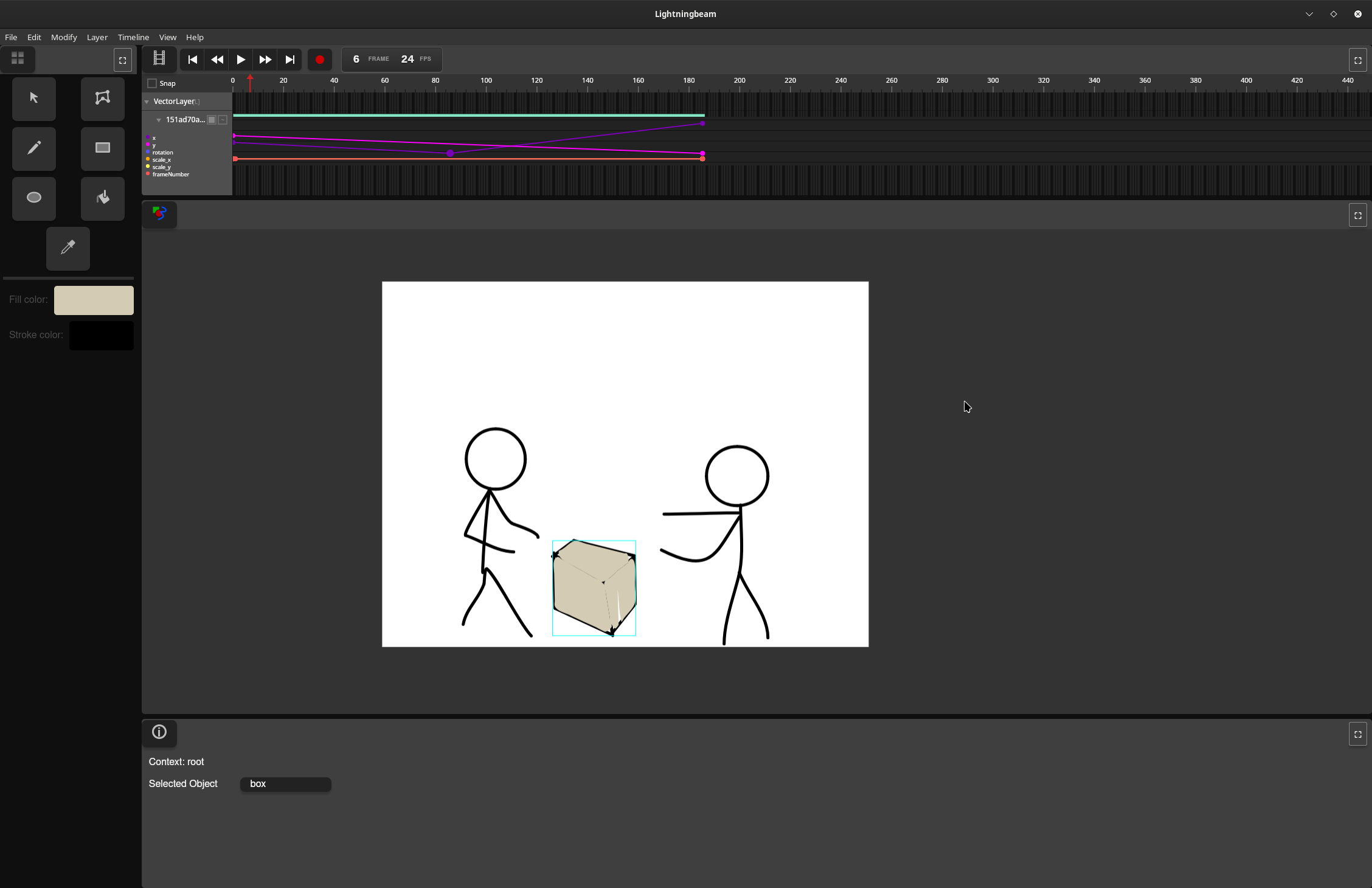

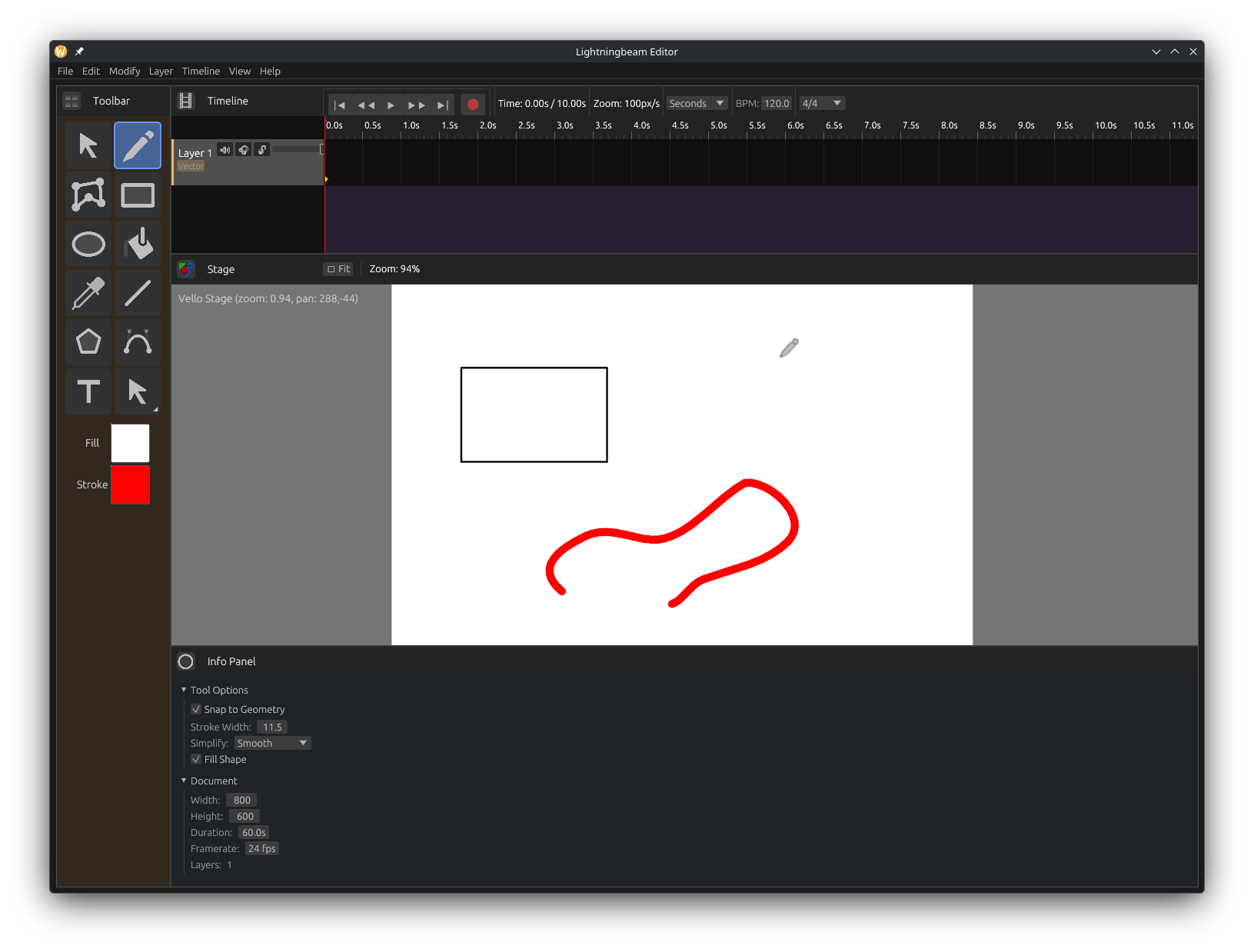

Lightningbeam is an integrated multimedia editor combining vector animation, audio production, and video editing in a single application. It's been a long road to get here, and the project has gone through several changes along the way. It started, as many projects do, with a simple itch to scratch.

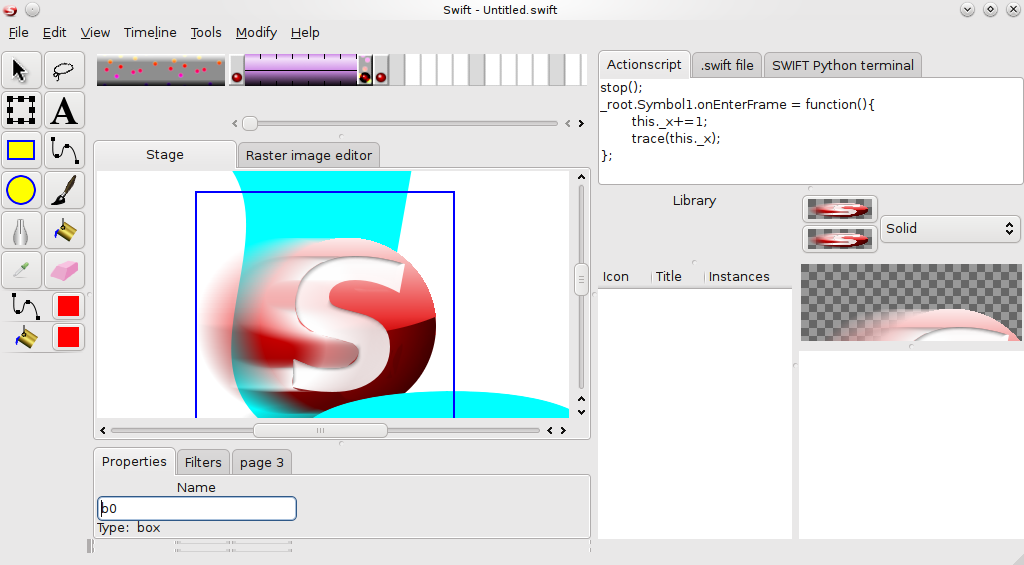

Swift

Back in April 2010, I wanted to make a simple Flash app - a dial which rotated in response to JavaScript input. I wanted to use Flash because I didn't know how to rotate things in JavaScript, and most solutions I found either used Flash or a third-party library - and Flash was what I had grown up using, so I was comfortable with it. I had just gotten my first computer of my own, and Flash was well outside my budget of $0. I assumed that the FOSS world would have a replacement for Flash, the same way there's GIMP for Photoshop, LibreOffice for Microsoft Office and so on. I was surprised to learn that there was no such program.

I did some more research and found that, although there was no graphical program to do so, there was a text-based one called swfc. I did some experimenting with it and found that yes, it worked quite well. But it wasn't very easy to use. So, I figured, "Well, I'll just write a GUI for swfc then. How hard could it be?"

I had never written a desktop program before; I had only written scripts for use in Flash or Blender. Python was what I was familiar with, and I was using only Ubuntu at the time, so I settled on an application development environment called Quickly. I wrote an initial GUI, learning PyGTK in the process, and over the course of a year and a half I rewrote it to use Cairo and added other speed enhancements. I called it Swift, as a play on the .swf format.

Swift was able to export .swf files, and even handle ActionScript. The way swfc handled ActionScript was a bit confusing, since it supported both ActionScript 2 and 3 with no way to specify which, so in areas where they conflicted you just had to guess which version's syntax to use.

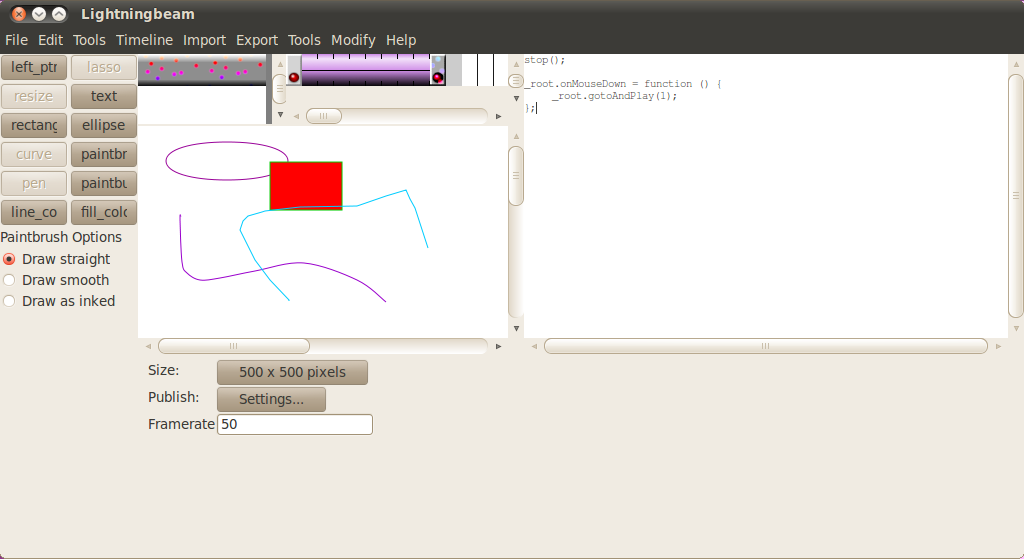

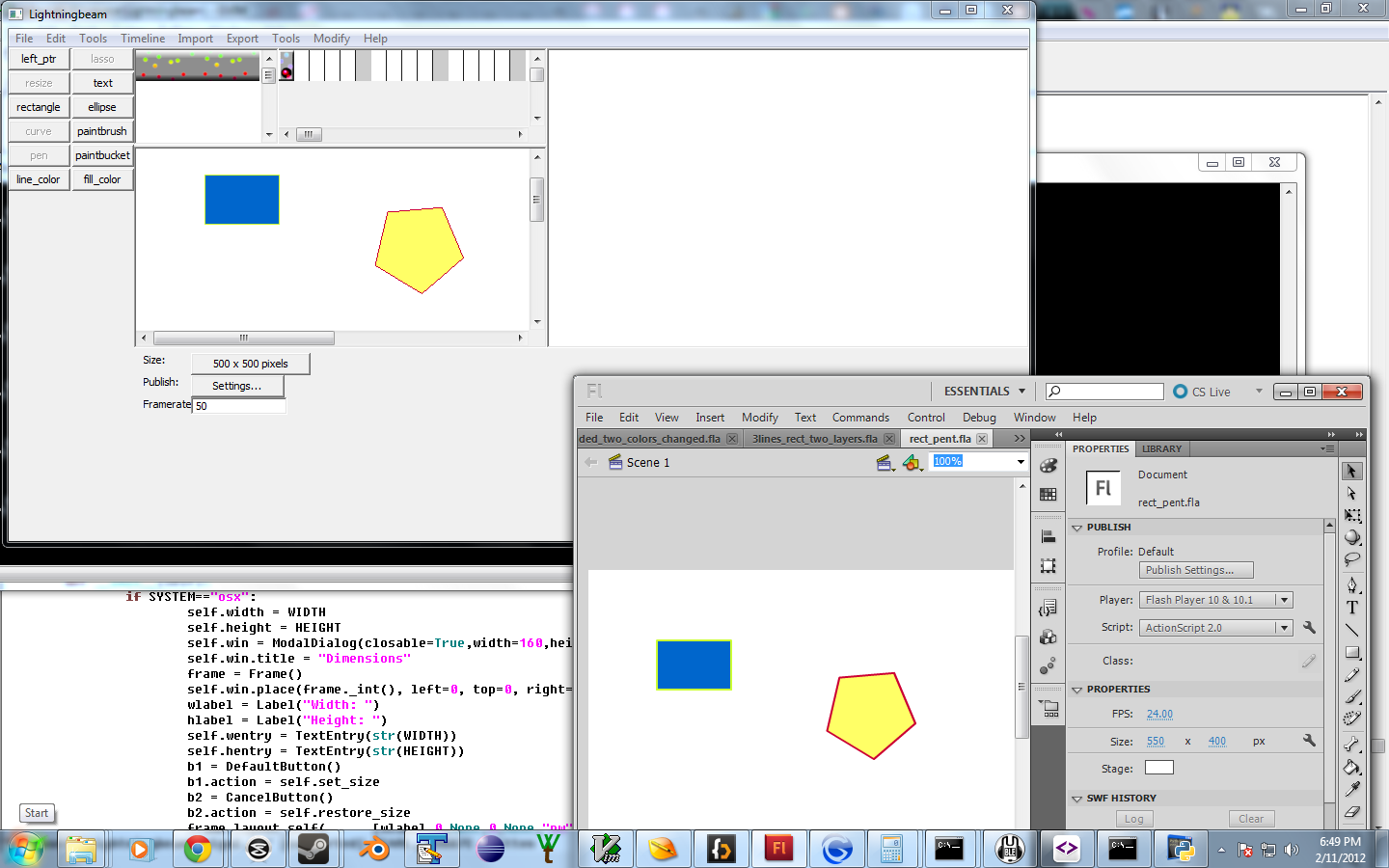

New name, new code

By 2012, I had more programming experience, and was realizing the limits of my current approach. The biggest problem I had was that Swift was highly dependent on GTK2, which was very difficult to build or distribute on Windows or Mac OS X, and was already outdated on Linux as major distros like Ubuntu and Fedora were moving to GTK3. I decided to rewrite the program from the ground up, and made my own GUI library that abstracted over PyGTK on Linux and PyGUI on Windows and Mac. This also allowed me some freedom to experiment with other GUI toolkits such as Pyglet and Kivy, which I was looking into because I kept running into performance problems. (It turns out the performance problems were mostly caused by writing everything in Python.)

I also discovered that Swift was not a great name for a program...because many other people were already using it. Even specifically for Flash tooling. So I had to come up with a new name, one that would be easier to find in a search engine. As I wrote in my name change announcement: "Lightning is the most dynamic thing encountered in nature, leaping miles in a fraction of a second. Lightning contains enormous amounts of power; the average lightning bolt produces 40 petawatts, or the equivalent of 100 million coal power plants. But lightning is also known for being very hard to control. Thus, the beam indicates a focusing of this energy. It is the goal of Lightningbeam to provide users with this power for creation of animated content."

The other big change was a new output format. HTML5 was more widely supported by then, and there were some devices on the market - notably, the iPhone - which supported HTML5 but did not support Flash. I added an export mode which would compile the project to a big self-contained HTML file, with ActionScript running as JavaScript - their syntax is largely the same, so I was able to provide almost full coverage for the language by making a polyfill for all the functions which ActionScript has and JavaScript doesn't.

I also did a bit of work on being able to import Flash .fla files into Lightningbeam - made more difficult by my lack of access to Flash, but helped by someone who goes by DigitalSeraphim. With their help Lightningbeam was able to import simple .fla files, though this feature never got fully fleshed out.

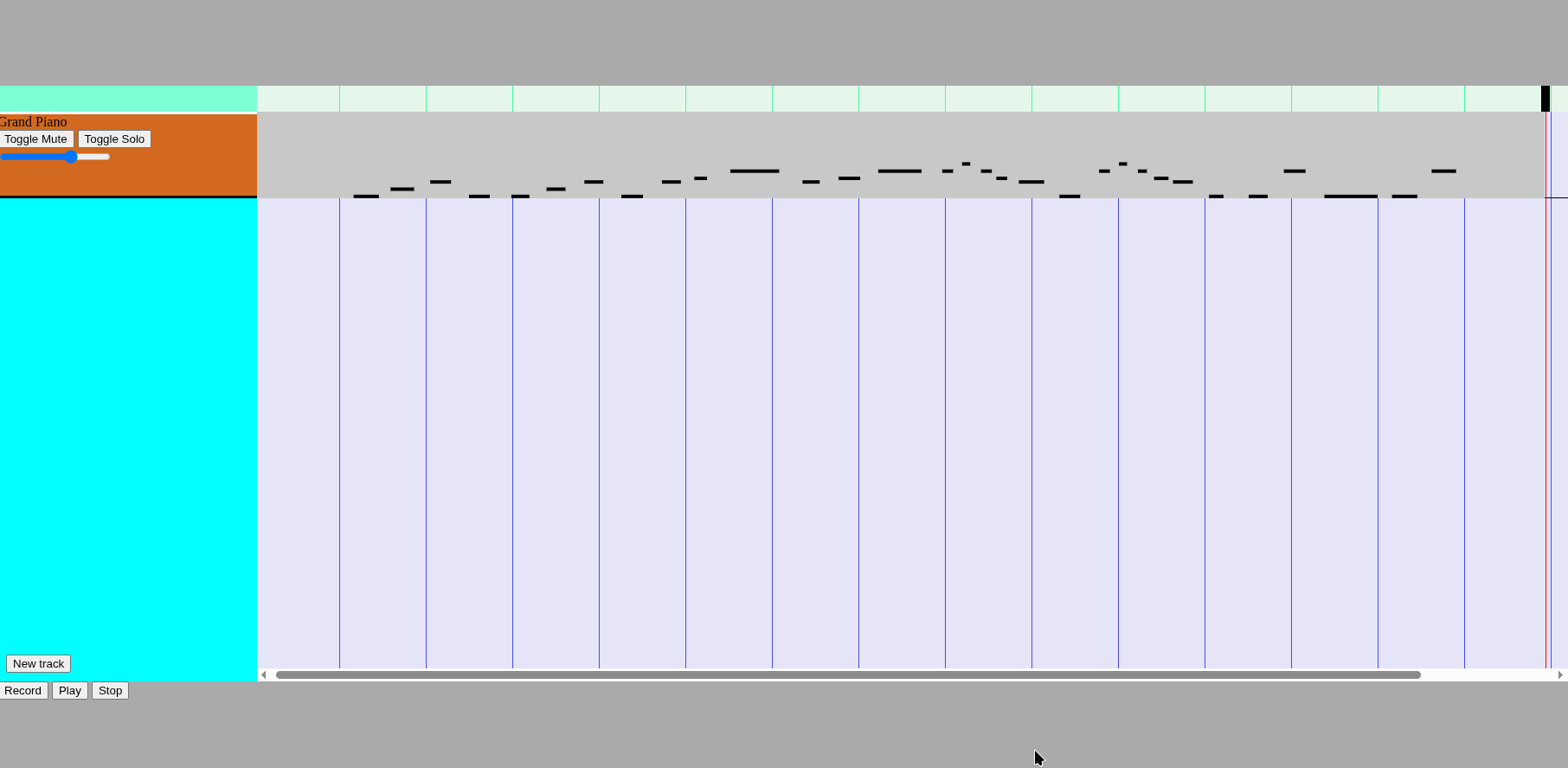

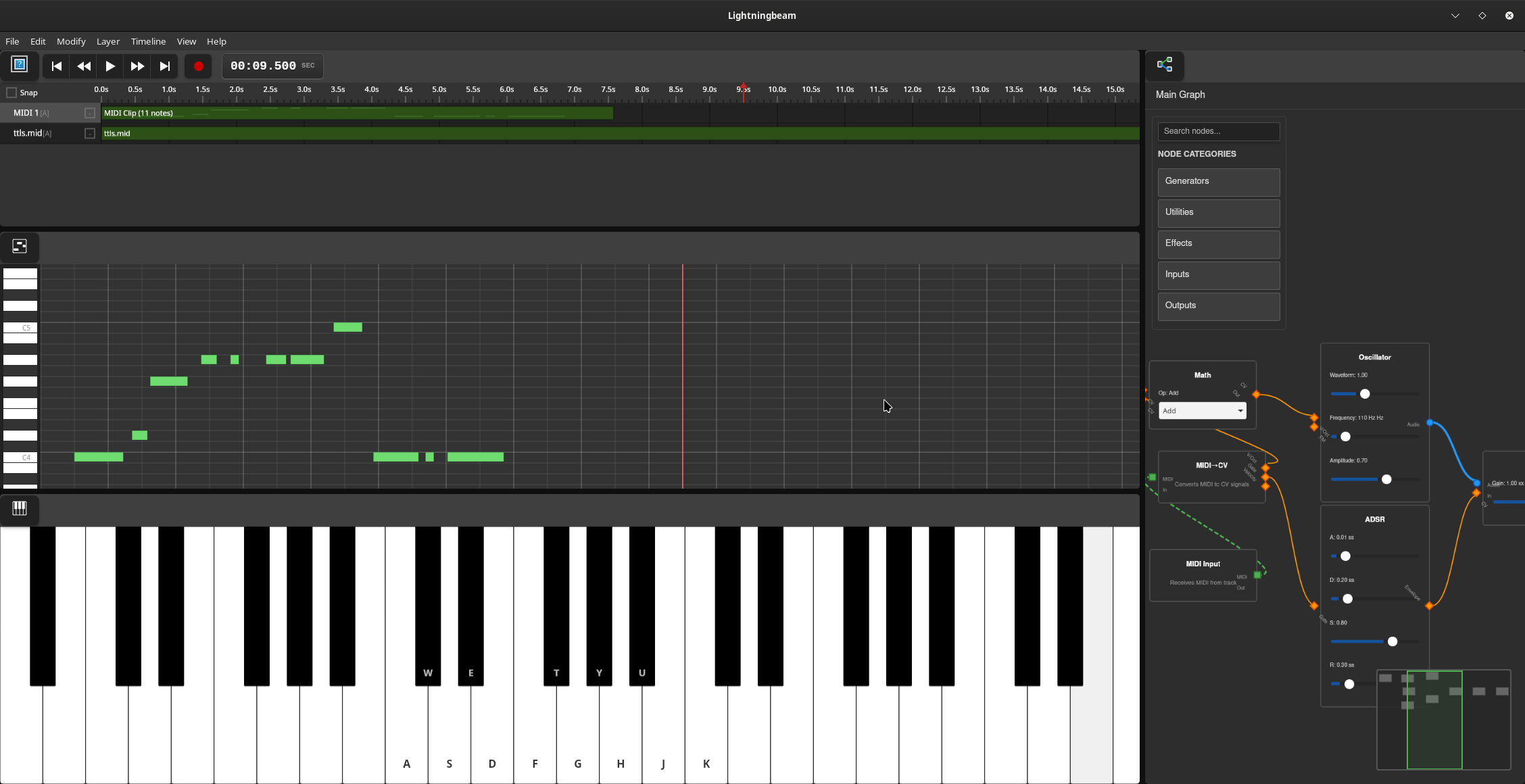

FreeJam

Another program that I had found myself missing on Linux was GarageBand. I looked for alternatives - at the time, LMMS was the only thing I could find, and it seemed to be barely functional, and had an extremely confusing UI. I decided to make my own open-source music editor, which I called FreeJam. I originally wrote it in Python, using Pyglet for rendering as well as audio. After struggling for a while to do real-time audio processing in Python I finally decided to rewrite it in C++, initially using raw OpenGL for rendering, but later moving to openFrameworks to handle rendering and audio. Then I stopped working on the project for a while because I got a Mac and could just use GarageBand.

Experimenting with web

I shelved Lightningbeam for several years. In 2020, I suddenly found myself stuck at home looking for things to do, and decided to return to the project. By this point, Flash was essentially dead, so I decided to refocus on HTML5 export. This removed the desktop dependency on swfc, so I found myself wondering: could I build Lightningbeam as a web app? I rewrote it and determined that...sort of. I got the basics of the UI in place, but ran into problems with file management and ran out of steam.

Around this time I also started experimenting with FreeJam on the web. I found a library called Tone.js which made it much easier to work with the Web Audio API. Using it, I was able to get a web version of FreeJam to a similar place to the desktop version.

The grand rewrite

In November 2024, I had a big opportunity for Lightningbeam: an offer to pay me to get the project to the point where it could be used for an animation project. I decided this was my chance to architect it properly, so I chose to rewrite it from the ground up. I wanted to use Rust this time - between its native performance and error-checking type system it seemed a much better choice than Python for an animation program. But Rust was a relatively new language for me, and I had a deadline. Then I discovered Tauri - an Electron-like framework that lets you write the backend of your app in Rust and the front end in JavaScript using web technologies, but using the system's webview instead of bundling Chromium so the app wouldn't be hugely bloated. It seemed like a great strategy - prototype it in JS, then gradually move functions to the Rust backend until the whole project was ported.

Convergence

The animation in question was a music video, so I added support for audio tracks, using Tone.js. This was easy because I'd already used it for FreeJam. In fact, the code I was writing was all feeling very familiar - tracks with clips on a timeline that the user could play or seek through. What if I merged FreeJam and Lightningbeam into a single project - an integrated multimedia editor which could work with animations, audio, or both depending on the project? I liked this idea, and I wanted to do it properly, so I replaced the Tone.js audio system with a new node-based audio backend written in Rust.
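The shared shape described above, tracks holding clips on a timeline with a playhead, can be sketched roughly as follows. This is an illustrative model only, not Lightningbeam's actual data structures: `Clip`, `Track`, `TrackKind`, and `Timeline` are hypothetical names, and times are assumed to be in seconds.

```rust
/// A clip placed on a track. Times are seconds on the project timeline
/// (an assumption made for this sketch).
#[derive(Debug, Clone, PartialEq)]
pub struct Clip {
    pub name: String,
    pub start: f64,
    pub duration: f64,
}

impl Clip {
    /// True if time `t` falls inside the half-open range [start, start + duration).
    pub fn contains(&self, t: f64) -> bool {
        t >= self.start && t < self.start + self.duration
    }
}

/// The kind of content a track carries (hypothetical; a real editor has more).
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum TrackKind {
    Vector,
    Audio,
}

pub struct Track {
    pub kind: TrackKind,
    pub clips: Vec<Clip>,
}

impl Track {
    /// The first clip on this track covering time `t`, if any.
    pub fn clip_at(&self, t: f64) -> Option<&Clip> {
        self.clips.iter().find(|c| c.contains(t))
    }
}

pub struct Timeline {
    pub tracks: Vec<Track>,
    pub playhead: f64,
}

impl Timeline {
    /// Move the playhead, clamping negative times to zero.
    pub fn seek(&mut self, t: f64) {
        self.playhead = t.max(0.0);
    }

    /// Every clip under the playhead, paired with the kind of its track.
    pub fn active_clips(&self) -> Vec<(TrackKind, &Clip)> {
        self.tracks
            .iter()
            .filter_map(|track| track.clip_at(self.playhead).map(|c| (track.kind, c)))
            .collect()
    }
}
```

Because animation and audio tracks answer the same question ("which clip is under the playhead?"), one timeline can drive both, which is the overlap that made a merged editor look natural.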

Tauri's limitations

While working on this, I also started working on video editing. Conceptually it's straightforward - video layers are just another type of layer like vector layers and audio tracks, and they get drawn on the stage. But I had to actually load the video. Initially I tried ffmpeg.js, but that had severe performance limitations - most notably, it could only handle videos of limited length or it would run out of memory. Instead, I chose to use Rust FFmpeg bindings, so I could decode the video in the high-performance backend, then send the frames to the frontend to draw them.

This is where I discovered Tauri's bandwidth limitations. Sending commands to the audio engine was one thing, but sending full video frames proved to be basically impossible at any decent resolution. This is because communication between the backend and frontend with Tauri isn't just copying memory - it serializes the whole thing into JSON, encoding binary data like images as base64, then sends that and decodes it on the frontend. This is way too expensive to work for uncompressed frames. I looked into Tauri's suggested alternative, which is to essentially cut a hole in the JS window, position another window aligned behind it, and draw to that with Rust (a technique called a native overlay) - but it turns out that's very platform-dependent, and in particular doesn't work on Linux/Wayland, which is the environment I'm mainly working in. So...I couldn't mix JS and Rust for rendering. The whole gradual porting plan was a bust.
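To put rough numbers on that cost, here is a back-of-the-envelope sketch in Rust. It takes the base64-over-JSON description above at face value. The 4-bytes-per-pixel RGBA layout is an illustrative assumption rather than a figure from the project, and the function names are made up for this example.

```rust
/// Bytes in one uncompressed frame, assuming 4 bytes per pixel (RGBA8).
/// The pixel format is an assumption made for this estimate.
pub fn raw_frame_bytes(width: u64, height: u64) -> u64 {
    width * height * 4
}

/// Length of standard padded base64 for `n` input bytes:
/// every 3 input bytes (rounded up) become 4 output characters.
pub fn base64_len(n: u64) -> u64 {
    (n + 2) / 3 * 4
}

/// Base64 characters per second that would have to cross the
/// backend/frontend boundary, ignoring JSON framing and decode time.
pub fn base64_bytes_per_second(width: u64, height: u64, fps: u64) -> u64 {
    base64_len(raw_frame_bytes(width, height)) * fps
}
```

For a 1920x1080 frame this works out to 8,294,400 raw bytes, or 11,059,200 base64 characters. At an assumed 30 fps that is roughly 332 MB of text per second, before counting the time spent encoding it on one side and decoding it on the other.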

Rewrite it in Rust!

So, I chose to bite the bullet, and rewrite the whole thing - yet again! - this time entirely in Rust, no Tauri. I couldn't reuse any of the UI code, but at least I was able to reuse the audio engine since I'd written it to be a separate module that didn't depend on the frontend at all. After looking at the capabilities and maturity of the various Rust UI toolkits, I decided to use egui, as its architecture fit the project better than Iced and nothing else seemed production-ready. It took me about four months to get the pure Rust version to the point that the Rust + JS version had been at, but now I am finally beginning to return to adding new features. This has also addressed a few pain points from the Tauri version that were inherent to its technology (for example, a lingering bug where dragging while updating the DOM would cause large areas of the screen to become selected as though they were text).

And that brings us up to the present! Stay tuned, and feel free to follow development on GitHub or Gitea.

Want to help fund development? Support Lightningbeam on Ko-fi.
